Modern Datalake Architecture

In the

Prerequisites

To complete this tutorial, you’ll need to get set up with some software. Here's a breakdown of what you'll need:

- Docker Engine: This tool lets you package and run applications in standardized software units called containers.
- Docker Compose: This acts as an orchestrator, simplifying the management of multi-container applications. It helps define and run complex applications with ease.

Installation: If you're starting fresh, the

Once you've installed Docker Desktop or the combination of Docker and Docker Compose, you can verify their presence by running the following command in your terminal:

docker-compose --version
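If you would rather check both prerequisites in one step, the following is a minimal sketch. It assumes a POSIX shell; `missing_tools` is a hypothetical helper name, not part of Docker. Note that newer Docker installations may expose Compose as `docker compose` rather than `docker-compose`, in which case this check would report `docker-compose` as missing even though Compose is available.

```shell
#!/bin/sh
# Print the name of every command in the argument list that is not on PATH.
# (missing_tools is a hypothetical helper written for this tutorial.)
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

missing="$(missing_tools docker docker-compose)"
if [ -n "$missing" ]; then
  # Left unquoted on purpose so several names print on one line.
  echo "Missing prerequisites:" $missing
else
  echo "docker and docker-compose are both installed"
fi
```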

You’ll also need a SingleStore license, which you can get

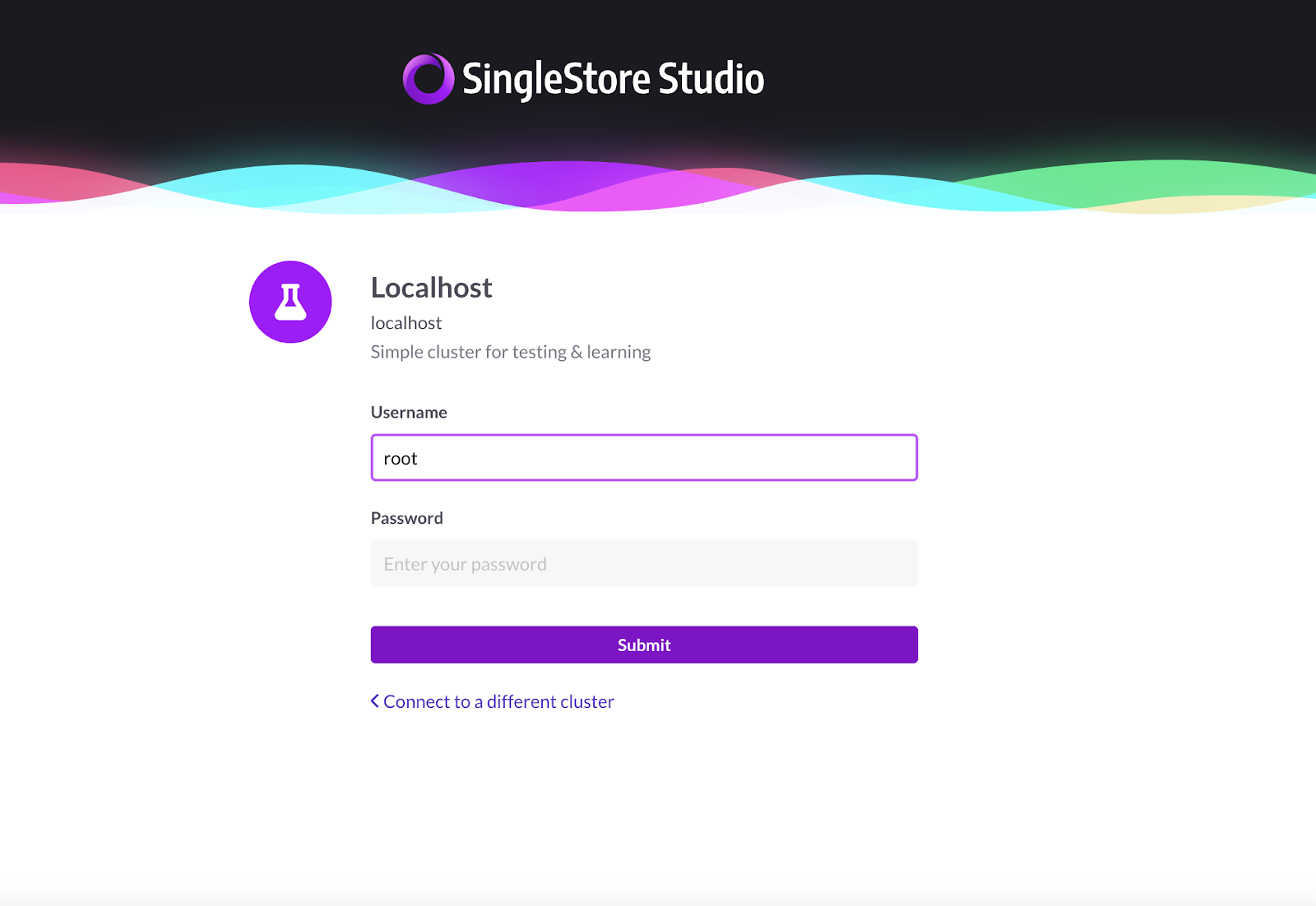

Keep note of both your license key and your root password. A random root password is assigned to your account, but you can change it in the SingleStore UI.

Getting Started

This tutorial depends on

The most important file in this repo is docker-compose.yaml, which describes a Docker environment with a SingleStore database (singlestore), a MinIO instance (minio), and an mc container that depends on the MinIO service.

The mc container contains an entrypoint script that first waits until MinIO is accessible, adds MinIO as a host, creates the classic-books bucket, uploads a books.txt file containing book data, sets the bucket policy to public, and then exits.

    version: '3.7'

    services:
      singlestore:
        image: 'singlestore/cluster-in-a-box'
        ports:
          - "3306:3306"
          - "8080:8080"
        environment:
          # Fill in your own license key and root password
          LICENSE_KEY: ""
          ROOT_PASSWORD: ""
          START_AFTER_INIT: 'Y'

      minio:
        image: minio/minio:latest
        ports:
          - "9000:9000"
          - "9001:9001"
        volumes:
          - data1-1:/data1
          - data1-2:/data2
        environment:
          MINIO_ROOT_USER: minioadmin
          MINIO_ROOT_PASSWORD: minioadmin
        command: ["server", "/data1", "/data2", "--console-address", ":9001"]

      mc:
        image: minio/mc:latest
        depends_on:
          - minio
        # Waits for MinIO, registers it as the host 'local', creates the bucket,
        # uploads books.txt, makes the bucket public, then exits.
        entrypoint: >
          /bin/sh -c "
          until (/usr/bin/mc config host add --quiet --api s3v4 local http://minio:9000 minioadmin minioadmin) do echo '...waiting...' && sleep 1; done;
          echo 'Title,Author,Year' > books.txt;
          echo 'The Catcher in the Rye,J.D. Salinger,1951' >> books.txt;
          echo 'Pride and Prejudice,Jane Austen,1813' >> books.txt;
          echo 'Of Mice and Men,John Steinbeck,1937' >> books.txt;
          echo 'Frankenstein,Mary Shelley,1818' >> books.txt;
          /usr/bin/mc mb --ignore-existing local/classic-books;
          /usr/bin/mc cp books.txt local/classic-books/books.txt;
          /usr/bin/mc policy set public local/classic-books;
          exit 0;
          "

    volumes:
      data1-1:
      data1-2:

Using a text editor, fill in the empty LICENSE_KEY and ROOT_PASSWORD values with your license key and root password.

In a terminal window, navigate to where you cloned the repo and run the following command to start up all the containers:

docker-compose up
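The containers take a moment to become ready. The mc entrypoint handles this with an unbounded `until` loop; from a second terminal you could apply the same idea with an upper limit on retries. The sketch below is one way to do that, assuming a POSIX shell. `wait_for` is a hypothetical helper, and the commented example assumes curl is installed and that MinIO's liveness endpoint is reachable through the 9000:9000 port mapping.

```shell
#!/bin/sh
# wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS tries have been used.
# Returns 0 on success and 1 if every attempt failed.
# (wait_for is a hypothetical helper written for this tutorial.)
wait_for() {
  attempts="$1"
  delay="$2"
  shift 2
  n=1
  while [ "$n" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    echo "...waiting ($n/$attempts)..." >&2
    # Only pause when another attempt will follow.
    if [ "$n" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    n=$((n + 1))
  done
  return 1
}

# Example (assumption: MinIO is published on localhost:9000):
# wait_for 30 1 curl -fsS http://localhost:9000/minio/health/live
```

Bounding the number of attempts means a misconfigured service surfaces as a failure instead of an endless wait.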

Open up a browser window and navigate to

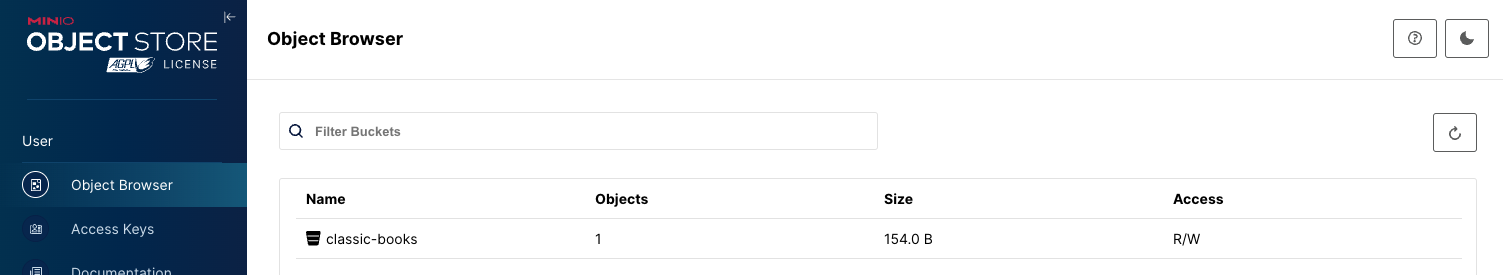

Check MinIO

Navigate to the MinIO Console, which the docker-compose.yaml above publishes on port 9001, and log in with minioadmin:minioadmin. You’ll see that the mc container has made a bucket called classic-books and that there is one object in the bucket.
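Because the mc entrypoint sets the bucket policy to public, the uploaded object should also be readable anonymously over HTTP. The following is a minimal sketch of that check, assuming the 9000:9000 port mapping from docker-compose.yaml and that curl is installed on the host. `object_url` is a hypothetical helper, and `MINIO_ENDPOINT` is an assumed variable name you can override.

```shell
#!/bin/sh
# Values assumed from the docker-compose.yaml in this tutorial.
MINIO_ENDPOINT="${MINIO_ENDPOINT:-http://localhost:9000}"
BUCKET="classic-books"
OBJECT="books.txt"

# Build a path-style URL: <endpoint>/<bucket>/<object>.
# (object_url is a hypothetical helper written for this tutorial.)
object_url() {
  printf '%s/%s/%s\n' "$1" "$2" "$3"
}

url="$(object_url "$MINIO_ENDPOINT" "$BUCKET" "$OBJECT")"
echo "Fetching $url"

# -f makes curl fail on HTTP errors; --max-time bounds the wait.
curl -fsS --max-time 5 "$url" || echo "Could not fetch $url (is MinIO running?)"
```

If the request succeeds, curl prints the CSV rows that the entrypoint wrote into books.txt.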

Explore with SQL

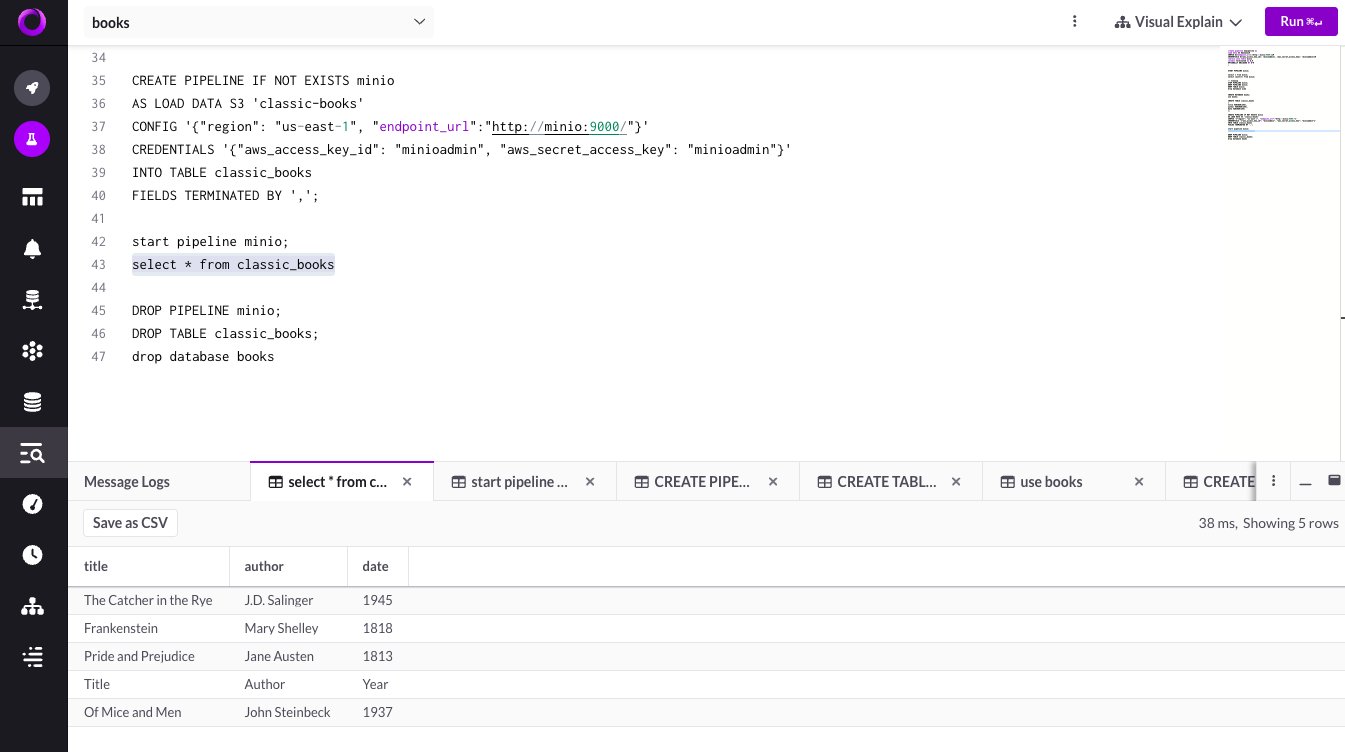

In SingleStore, navigate to the SQL Editor and run the following:

    -- Create a new database named 'books'
    CREATE DATABASE books;

    -- Switch to the 'books' database
    USE books;

    -- Create a table named 'classic_books' to store information about classic books
    CREATE TABLE classic_books
    (
        title VARCHAR(255),
        author VARCHAR(255),
        date VARCHAR(255)
    );

    -- Define a pipeline named 'minio' to load data from an S3 bucket called 'classic-books'
    -- The pipeline loads data into the 'classic_books' table
    CREATE PIPELINE IF NOT EXISTS minio
    AS LOAD DATA S3 'classic-books'
    CONFIG '{"region": "us-east-1", "endpoint_url":"http://minio:9000/"}'
    CREDENTIALS '{"aws_access_key_id": "minioadmin", "aws_secret_access_key": "minioadmin"}'
    INTO TABLE classic_books
    FIELDS TERMINATED BY ',';

    -- Start the 'minio' pipeline to initiate data loading
    START PIPELINE minio;

    -- Retrieve and display all records from the 'classic_books' table
    SELECT * FROM classic_books;

    -- Drop the 'minio' pipeline to stop data loading
    DROP PIPELINE minio;

    -- Drop the 'classic_books' table to remove it from the database
    DROP TABLE classic_books;

    -- Drop the 'books' database to remove it entirely
    DROP DATABASE books;

This SQL script walks through the full lifecycle of a small dataset of classic books. It creates a database named books and, inside it, a table called classic_books with columns for title, author, and publication date.

Next, it defines a pipeline named minio that reads from the S3 bucket classic-books and loads the data into the classic_books table. The pipeline's CONFIG and CREDENTIALS clauses supply the region, the endpoint URL, and the access keys.

Starting the minio pipeline begins loading the data. Once the data has been loaded, the SELECT query returns every record stored in classic_books.

Finally, the script cleans up: it drops the minio pipeline, then the classic_books table, and then the books database itself. This script should get you started experimenting with MinIO data in SingleStore.
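The same statements can also be sent from a terminal, since SingleStore speaks the MySQL wire protocol. The sketch below is one possible approach, not part of the original tutorial: it assumes a MySQL-compatible `mysql` client is installed on the host, that port 3306 is published as in docker-compose.yaml, and that `SINGLESTORE_PASSWORD` (an assumed variable name) holds your root password. `run_sql` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: run one SQL statement against the SingleStore container.
# Assumes a MySQL-compatible `mysql` client on PATH, port 3306 published as in
# docker-compose.yaml, and SINGLESTORE_PASSWORD set to your root password.
run_sql() {
  mysql --host=127.0.0.1 --port=3306 --user=root \
    --password="${SINGLESTORE_PASSWORD:-}" --execute="$1"
}

# Example calls, meaningful only before the DROP statements have been run:
# run_sql "SELECT COUNT(*) FROM books.classic_books"
# run_sql "SELECT * FROM information_schema.PIPELINES_ERRORS"
```

Counting rows this way is a quick check that the pipeline has finished loading before you inspect the table.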

Build on this Stack

This tutorial quickly sets up a data stack that lets you experiment with storing, processing, and querying data in object storage. Pairing SingleStore, a cloud-native database known for its speed and versatility, with MinIO forms an important building block of the modern datalake stack.

As the industry trend leans towards the disaggregation of storage and compute, this setup empowers developers to explore innovative data management strategies. Whether you're interested in building data-intensive applications, implementing advanced analytics, or experimenting with AI workloads, this tutorial serves as a launching pad.

We invite you to build upon this data stack, experiment with different datasets and configurations, and unleash the full potential of your data-driven applications. For any questions or ideas, feel free to reach out to us at hello@min.io or join our