Authors:

(1) Arindam Mitra;

(2) Luciano Del Corro, work done while at Microsoft;

(3) Shweti Mahajan, work done while at Microsoft;

(4) Andres Codas, denote equal contributions;

(5) Clarisse Simoes, denote equal contributions;

(6) Sahaj Agarwal;

(7) Xuxi Chen, work done while at Microsoft;;

(8) Anastasia Razdaibiedina, work done while at Microsoft;

(9) Erik Jones, work done while at Microsoft;

(10) Kriti Aggarwal, work done while at Microsoft;

(11) Hamid Palangi;

(12) Guoqing Zheng;

(13) Corby Rosset;

(14) Hamed Khanpour;

(15) Ahmed Awadall.

Table of Links

Teaching Orca 2 to be a Cautious Reasoner

B. BigBench-Hard Subtask Metrics

C. Evaluation of Grounding in Abstractive Summarization

F. Illustrative Example from Evaluation Benchmarks and Corresponding Model Outpu

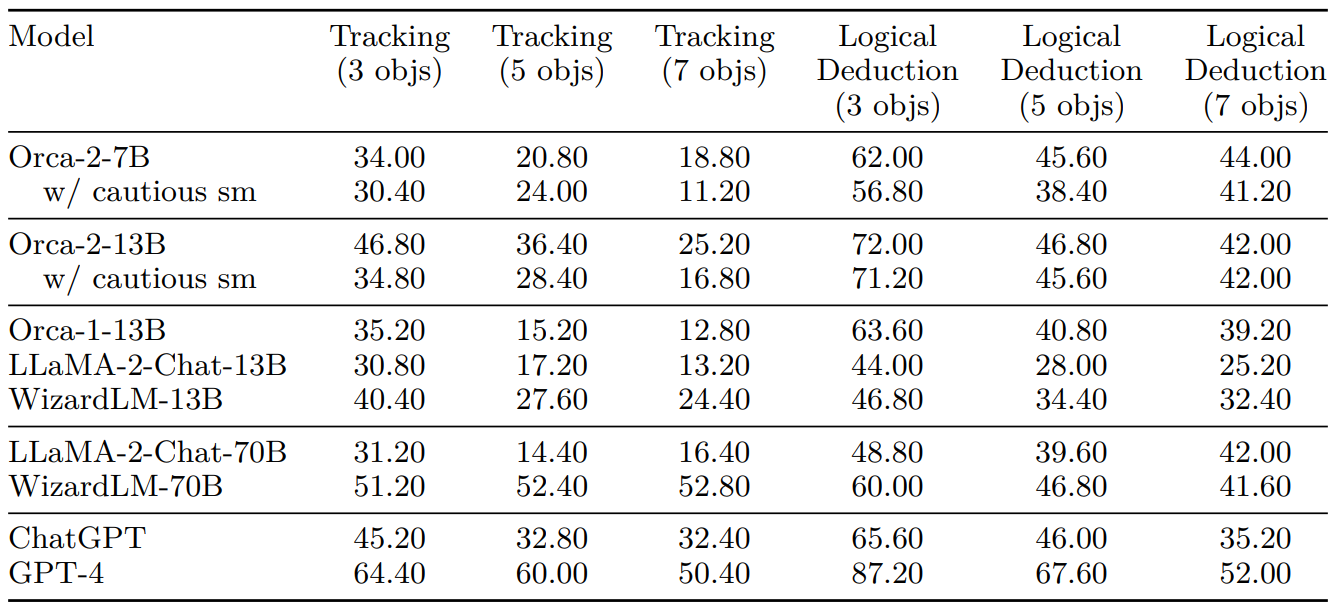

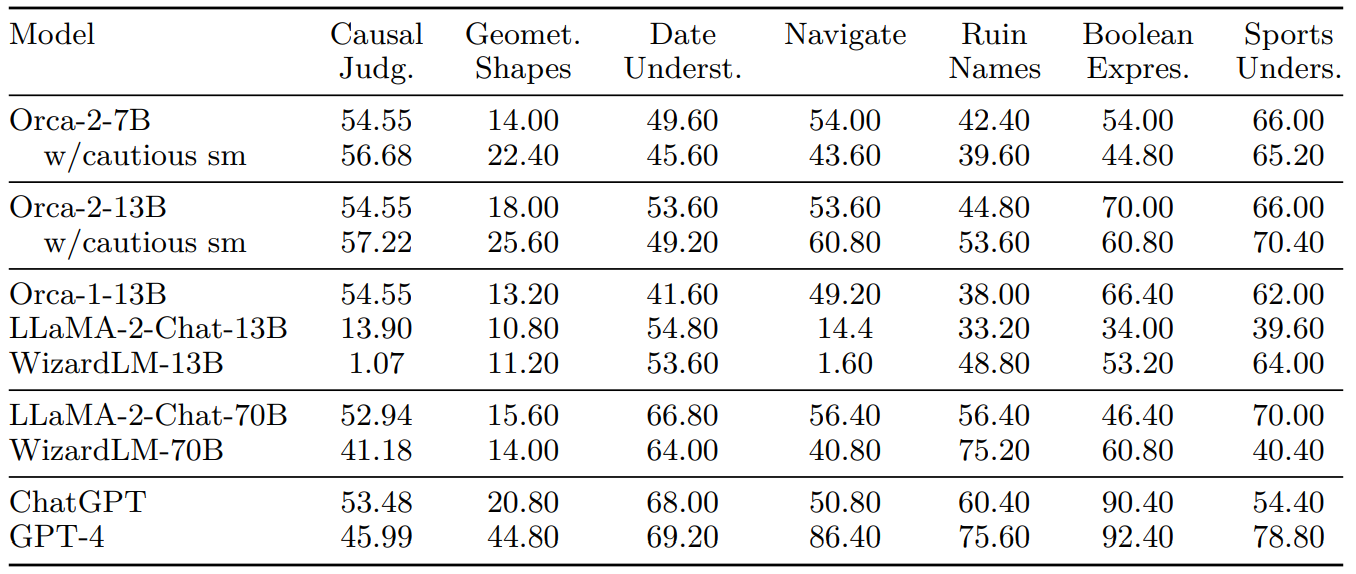

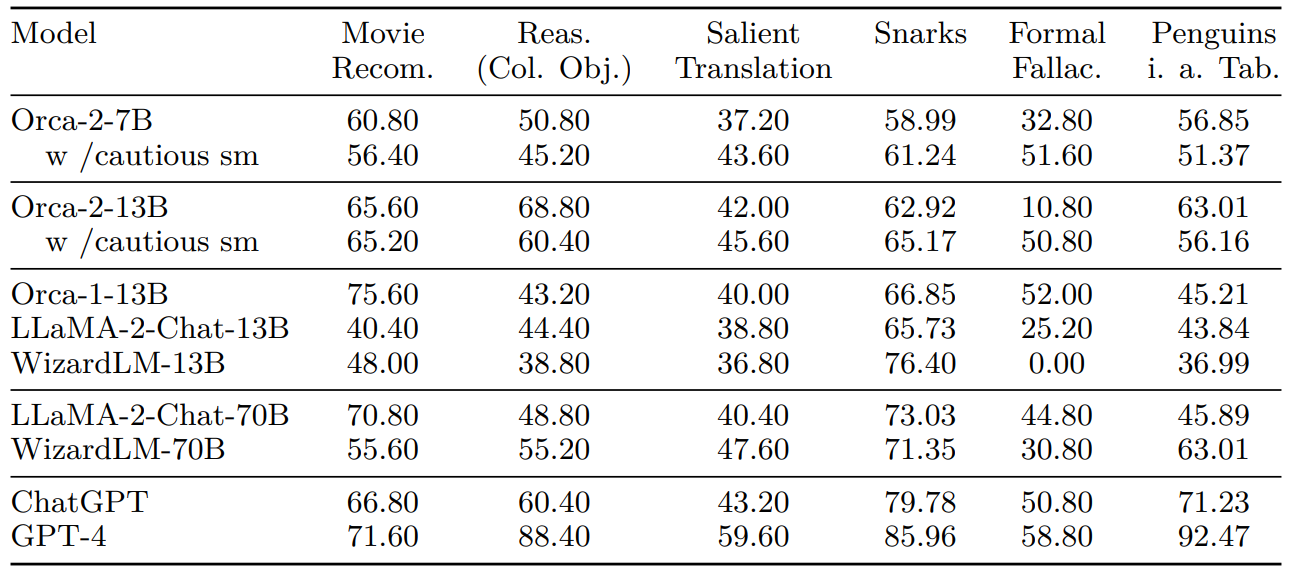

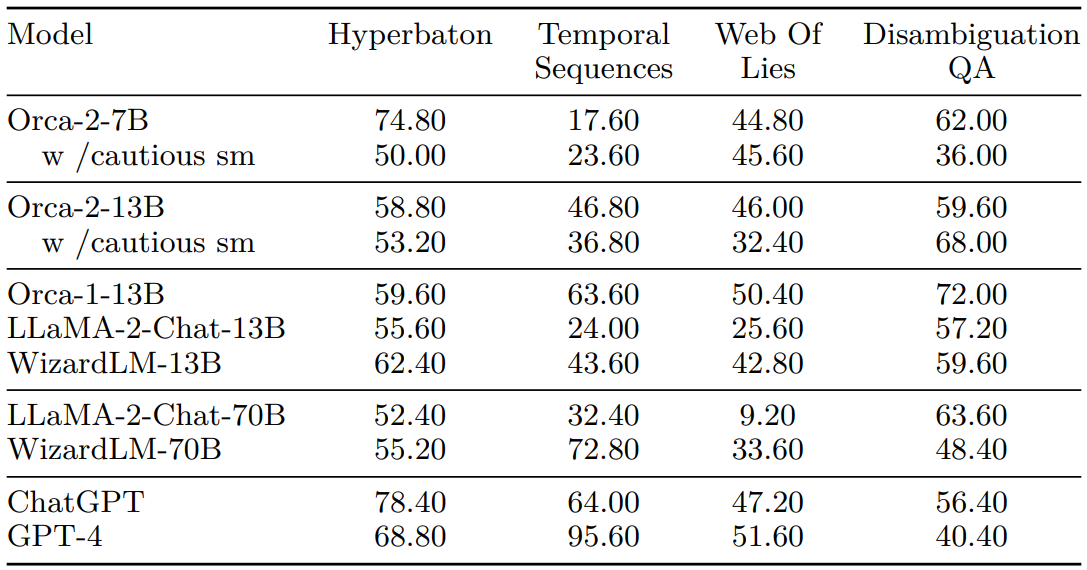

B BigBench-Hard Subtask Metrics

Table 7, 8, 9, and 10 showcase the zero-shot performance of Orca 2 and the baseline models on each BBH MCQ reasoning task, with accuracy being the metric used to evaluate performance.

This paper is available on arxiv under CC 4.0 license.