Table of Links

2 Background & Problem Statement

2.1 How can we use MLLMs for Diffusion Synthesis that Synergizes both sides?

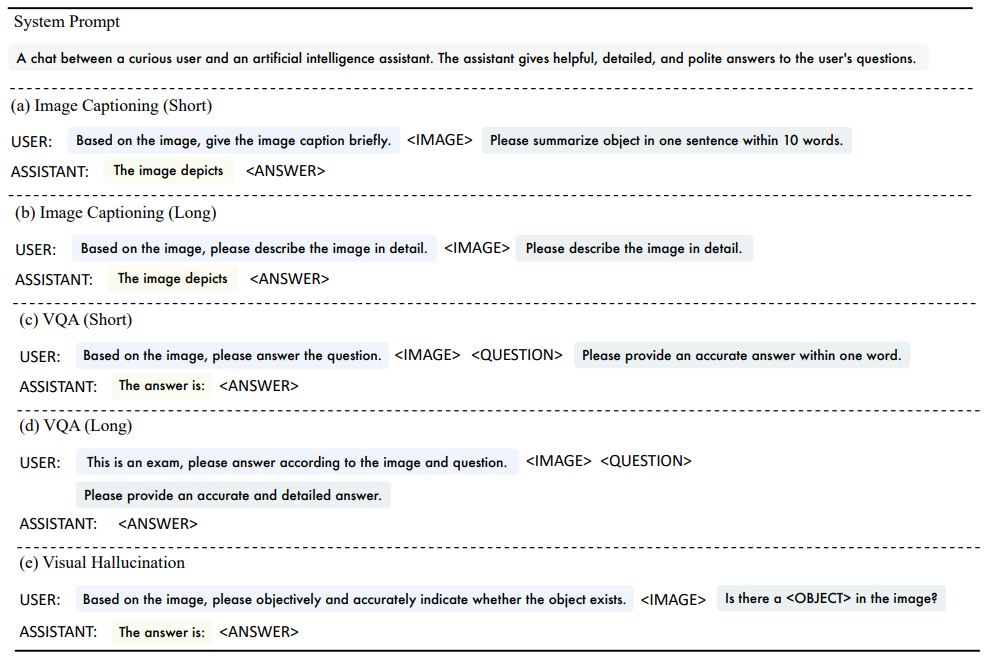

3.1 End-to-End Interleaved generative Pretraining (I-GPT)

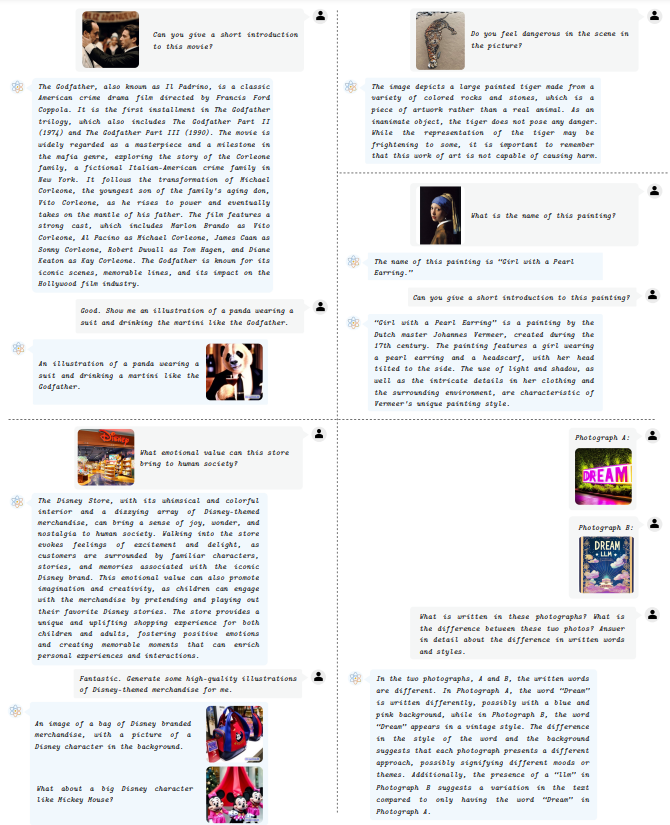

4 Experiments and 4.1 Multimodal Comprehension

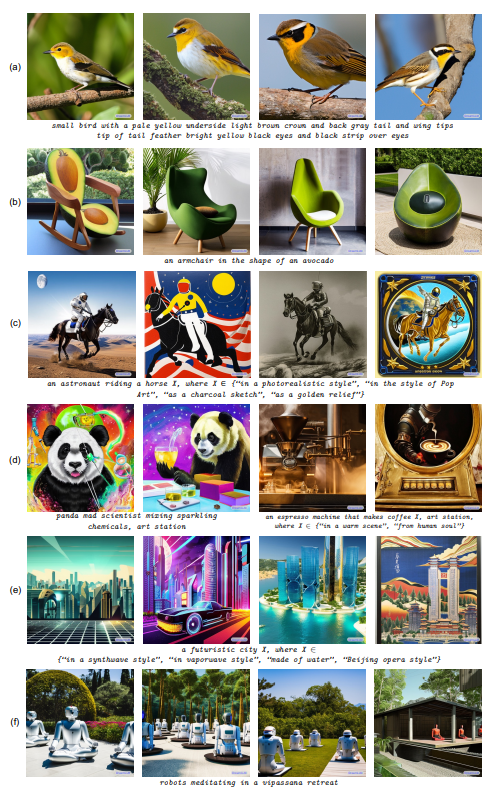

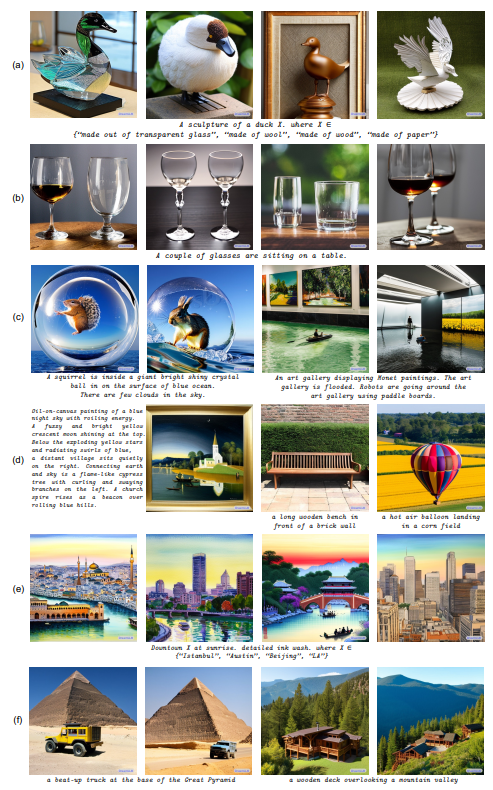

4.2 Text-Conditional Image Synthesis

4.3 Multimodal Joint Creation & Comprehension

5 Discussions

5.1 Synergy between Creation & Comprehension?

5.2 What is learned by DreamLLM?

B Additional Qualitative Examples

E Limitations, Failure Cases & Future Works

E LIMITATIONS, FAILURE CASES & FUTURE WORKS

Limitations. While DREAMLLM has made significant strides toward the development of versatile, creative, and foundational MLLMs, it still has several limitations.

Model scale. The primary constraint pertains to the scale of the LLMs utilized. Current evaluations mainly employ 7B LLMs as the base model, and despite the impressive results garnered, the potential benefits of larger model sizes, such as 65B or 130B (Kaplan et al., 2020), are worth future exploration.

Training data. The second challenge relates to the quality and quantity of training data (Jia et al., 2021). As the model size and capabilities scale up, a corresponding increase in data is crucial. However, the procurement and refinement of high-quality training data present substantial logistical and financial hurdles. For instance, the open-source interleaved dataset MMC4 contains a significant amount of noise in the form of text and images, like commercial advertisements. This noise could adversely affect the model’s output language and image style.

Prompt sensitivity. The sensitivity of LLMs to human prompts is a known issue (Wei et al., 2022b; Wang et al., 2023b; Zhou et al., 2023), a challenge that extends to MLLMs. For instance, MLLMs’ propensity for detailed responses necessitates tailored prompting to elicit concise and short answers, which is particularly useful when addressing Visual Question Answering (VQA) tasks.
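As a minimal sketch of what such tailored prompting could look like, the snippet below appends a short-answer instruction to a VQA question and normalizes the model's reply before comparison with a reference answer. The suffix wording and the helper names `build_vqa_prompt` and `normalize_answer` are assumptions made for illustration; the paper does not specify the exact prompt it uses.

```python
# Hypothetical instruction suffix; the exact wording is an assumption,
# not taken from the paper.
SHORT_ANSWER_SUFFIX = "Answer the question using a single word or phrase."


def build_vqa_prompt(question: str, concise: bool = True) -> str:
    """Return a VQA prompt, optionally asking the model for a short answer."""
    question = question.strip()
    if not concise:
        return question
    # Put the brevity instruction on its own line after the question.
    return f"{question}\n{SHORT_ANSWER_SUFFIX}"


def normalize_answer(answer: str) -> str:
    """Lowercase the reply and drop surrounding whitespace and trailing punctuation."""
    return answer.strip().lower().rstrip(".!?")
```

Without the suffix, a model that favors detailed responses may return a full sentence that fails exact-match VQA scoring even when the answer it contains is correct.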

Failure Cases. The main failure cases of DREAMLLM occur in content creation conditioned on multiple images. For instance, when presented with two images and a composite instruction such as “A and B”, DREAMLLM sometimes generates a single subject that amalgamates the characteristics of A and B. This output aligns more closely with the directive “A like B”.

This phenomenon is not unique to DREAMLLM, but is also observed in specialized compositional generation methodologies, such as Structure Diffusion (Feng et al., 2023; Chefer et al., 2023). This recurring issue may be attributed to the inherent complexity of compositional generation tasks, compounded by the severe scarcity of data specific to this domain.

Future Works. DREAMLLM is a simple and general multimodal learning framework, and our future work aims to enhance it by integrating fine-grained visual comprehension via methods like precise referring instruction tuning (Zhao et al., 2023a). We also plan to expand beyond visual and linguistic content comprehension and generation. Several promising research directions include:

• Exploring applications of in-context generation capabilities of DREAMLLM to complex tasks such as image-to-image translation (Isola et al., 2017; Zhang et al., 2023c; Zhang & Agrawala, 2023).

• Utilizing DREAMLLM’s context consistency feature for geometry-preserving tasks, including 3D content creation (Poole et al., 2023; Qi et al., 2023b; Liu et al., 2023b), representation learning (Dong et al., 2023; Qi et al., 2023a; Zhang et al., 2023a;e), scene comprehension (Zhang et al., 2023b; Hong et al., 2023), and embodied artificial intelligence (Ichter et al., 2022).

• Striving to achieve a unified multimodal zero-shot generalist by extending the scope to various modalities using techniques such as ImageBind (Girdhar et al., 2023) and exploring content creation models in other modalities like audio (Kong et al., 2021).

This paper is available on arXiv under the CC BY-NC-ND 4.0 DEED license.

Authors:

(1) Runpei Dong, Xi’an Jiaotong University and Internship at MEGVII;

(2) Chunrui Han, MEGVII Technology;

(3) Yuang Peng, Tsinghua University and Internship at MEGVII;

(4) Zekun Qi, Xi’an Jiaotong University and Internship at MEGVII;

(5) Zheng Ge, MEGVII Technology;

(6) Jinrong Yang, HUST and Internship at MEGVII;

(7) Liang Zhao, MEGVII Technology;

(8) Jianjian Sun, MEGVII Technology;

(9) Hongyu Zhou, MEGVII Technology;

(10) Haoran Wei, MEGVII Technology;

(11) Xiangwen Kong, MEGVII Technology;

(12) Xiangyu Zhang, MEGVII Technology and a Project leader;

(13) Kaisheng Ma, Tsinghua University and a Corresponding author;

(14) Li Yi, Tsinghua University, a Corresponding author and Project leader.